The illustrations have really exceeded our expectations. We love how they turned out! They are going to be very impactful in terms of how people engage with the app. The team was such a pleasure to work with - such great attention to detail through the whole process.

AI Illustration at Scale: Architecting a Pipeline That Replaced Months of Traditional Commissioning

Yangra needed 200+ custom illustrations to bring their app to life - a volume that would typically take months of commissioning. Whitespectre created a multi-agent AI illustration pipeline that produces on-brand artwork in minutes, at a fraction of the cost, and supports Yangra's future content needs.

SERVICES

Product Strategy

UI/UX Design

Technical Architecture

Custom AI Tooling

TECHNOLOGY

Nano-Banana

Cloudflare

React

Node

TypeScript

CLIENT

In a Nutshell

Challenge: Yangra, a Hinduism content app, needed 200+ custom illustrations across multiple content categories. Traditional commissioning would take months, cost significantly more, and couldn't scale as the content library grew.

Solution: We architected and built a multi-agent AI illustration pipeline - with componentized prompts, category-level generation rules, and full traceability - to generate hundreds of on-brand, high-quality illustrations that bring the app to life.

Results:

- 200+ on-brand illustrations delivered in weeks at a fraction of the cost of traditional commissioning

- 75% of illustrations publish-ready on first generation

- A long-term, user-friendly AI solution that scales with the app

Scaling 200+ Custom Illustrations for a Content App

Yangra needed over 200 custom illustrations to bring their Hinduism content to life - philosophy, practices, epics, and more. Through a traditional agency or freelance team, that volume would be costly and take months. And as content grew, Yangra would be back in the queue for every new batch.

The solution needed to be both cost-effective and high-quality - 200 illustrations that feel like they belong to the same visual system while being meaningfully different from each other: varied in composition, color, and energy, as appropriate to each content category.

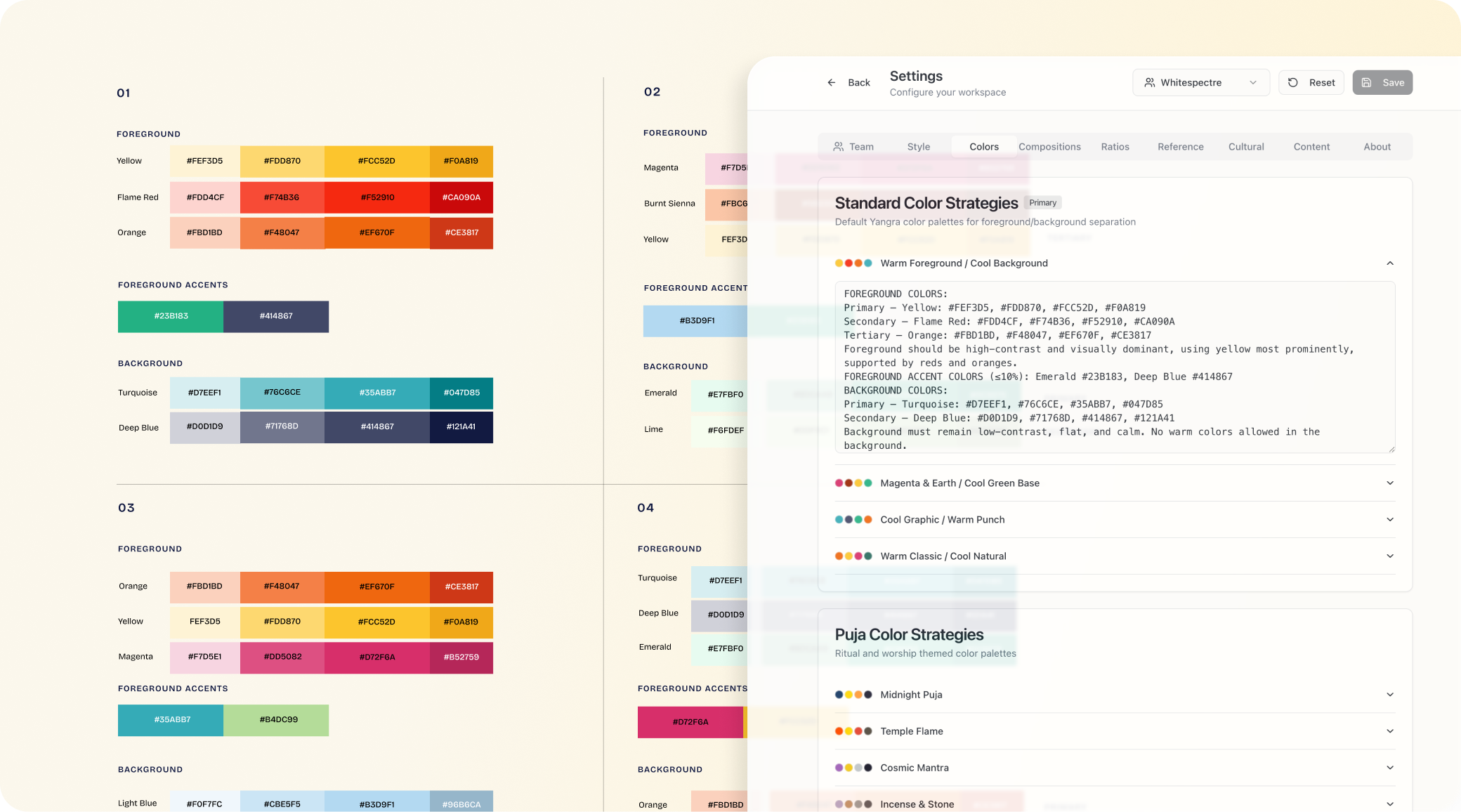

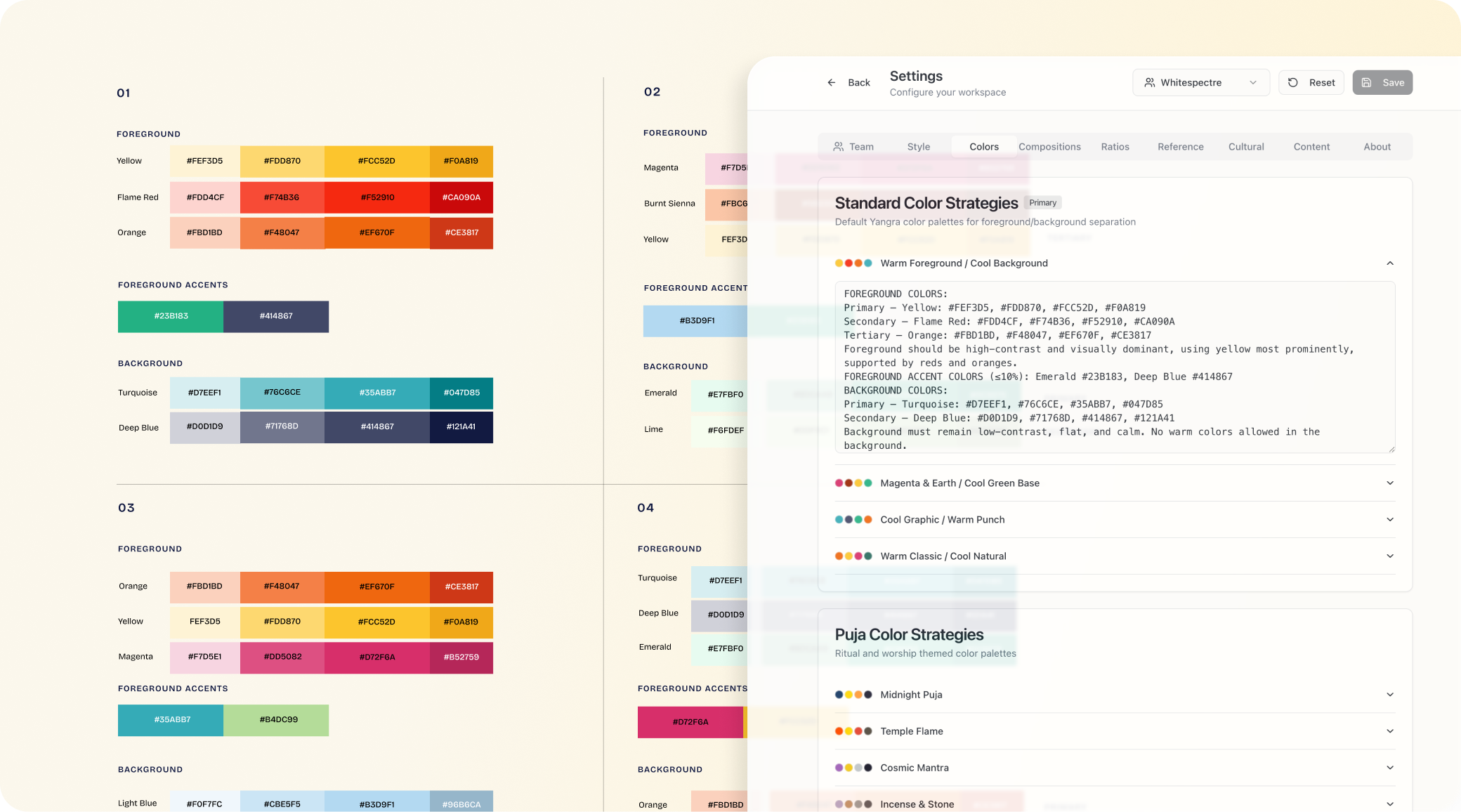

As Yangra's product and engineering partner, we'd already built their illustration style guide - defining the aesthetic, tonal contrast, color architecture, and compositional language. The next challenge was building the infrastructure to generate illustrations that reflected both the content and the visual system, at scale.

Multi-Agent AI Pipeline with Componentized Prompts

AI-generated content at scale is not a prompting problem. It's a systems engineering problem - discrete components, clear separation of concerns, and full visibility into what's happening at each stage.

Multi-agent pipeline.

A concept agent reads Yangra's app content - titles, descriptions, articles - alongside category-specific rules, and generates a structured scene description. A separate output agent applies the style lock, color strategy, and composition rules to produce the illustration.

One model handling both interpretation and generation produced poor results and made failures impossible to isolate and fix. Separating them meant cleaner outputs, independent debugging, and no need for manual briefs per image.
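To make the separation concrete, here is a minimal TypeScript sketch of a two-stage pipeline in which a failure is always attributed to one stage. It is an illustration under stated assumptions, not Yangra's actual code: the type names, `runPipeline`, and the synchronous agent signatures are all hypothetical, and real agents would call a model asynchronously.

```typescript
// Hypothetical types for illustration; none of these names come from the Yangra codebase.
interface ContentItem { id: string; title: string; description: string; category: string; }
interface SceneDescription { contentId: string; scene: string; }
interface Illustration { contentId: string; imageRef: string; }

// Real agents would call a model asynchronously; synchronous signatures keep the sketch short.
type ConceptAgent = (item: ContentItem, categoryRules: string[]) => SceneDescription;
type OutputAgent = (scene: SceneDescription) => Illustration;

type PipelineResult =
  | { status: "done"; scene: SceneDescription; illustration: Illustration }
  | { status: "failed"; stage: "concept" | "output"; error: string; scene?: SceneDescription };

type Attempt<T> = { kind: "ok"; value: T } | { kind: "error"; error: string };

// Runs one stage and converts a thrown exception into a value.
function attempt<T>(fn: () => T): Attempt<T> {
  try {
    return { kind: "ok", value: fn() };
  } catch (e) {
    return { kind: "error", error: String(e) };
  }
}

// Interpretation and generation run as separate stages, so a failure names its stage.
function runPipeline(
  item: ContentItem,
  categoryRules: string[],
  conceptAgent: ConceptAgent,
  outputAgent: OutputAgent
): PipelineResult {
  const concept = attempt(() => conceptAgent(item, categoryRules));
  if (concept.kind === "error") {
    return { status: "failed", stage: "concept", error: concept.error };
  }
  const scene = concept.value;
  const output = attempt(() => outputAgent(scene));
  if (output.kind === "error") {
    // The scene is kept so the output stage can be retried without re-running the concept stage.
    return { status: "failed", stage: "output", error: output.error, scene };
  }
  return { status: "done", scene, illustration: output.value };
}
```

Because each agent is passed in as a function, either stage can be swapped for a stub and debugged on its own.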

Prompt componentization.

Rather than a monolithic prompt, the generation input is broken into discrete, independently tunable components: content description, style lock, composition rules, color strategy, and reference image sets. Each can be versioned, tested, and owned by a different team member.
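As a rough sketch of what componentization can look like in code, the example below assembles a prompt from separately versioned parts. The component list mirrors the one above, but the `PromptComponent` shape, the `[name@version]` header format, and `assemblePrompt` are assumptions made for illustration, not the pipeline's real format.

```typescript
// Hypothetical shapes: the component list follows the text, the fields are assumed.
interface PromptComponent {
  name: string;
  version: string;
  text: string;
}

interface PromptInput {
  contentDescription: PromptComponent;
  styleLock: PromptComponent;
  compositionRules: PromptComponent;
  colorStrategy: PromptComponent;
  referenceImages: string[]; // identifiers of reference images, passed alongside the text
}

interface AssembledPrompt {
  text: string;
  componentVersions: Record<string, string>;
  referenceImages: string[];
}

// Joins the components in a fixed order and records which version of each was used.
function assemblePrompt(input: PromptInput): AssembledPrompt {
  const ordered = [
    input.contentDescription,
    input.styleLock,
    input.compositionRules,
    input.colorStrategy,
  ];
  const componentVersions: Record<string, string> = {};
  for (const component of ordered) {
    componentVersions[component.name] = component.version;
  }
  const text = ordered
    .map((component) => "[" + component.name + "@" + component.version + "]\n" + component.text)
    .join("\n\n");
  return { text, componentVersions, referenceImages: input.referenceImages.slice() };
}
```

Swapping a single component, for example a new version of the color strategy, changes only that section of the assembled prompt, which is what makes each part testable on its own.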

Systematic variation.

Eight color strategies carved from the brand palette create visual differentiation without going off-brand. Five composition types and category-specific content templates ensure a movement scene conveys energy while a recovery scene conveys stillness. This is what prevents 200 illustrations from looking like 200 AI copies of the same image.
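One way to get repeatable variation is to pick a color strategy and a composition type deterministically from each content item's ID, restricted by category rules. The sketch below assumes that approach. The source states the counts (eight and five) but not how selection works, so the placeholder names, the category-to-composition mapping, and the hash-based selection are all hypothetical.

```typescript
// Placeholder names: the text gives the counts (eight and five) but not the actual strategies.
const COLOR_STRATEGIES = [
  "color-1", "color-2", "color-3", "color-4",
  "color-5", "color-6", "color-7", "color-8",
] as const;
const COMPOSITION_TYPES = [
  "composition-1", "composition-2", "composition-3", "composition-4", "composition-5",
] as const;

type ColorStrategy = typeof COLOR_STRATEGIES[number];
type CompositionType = typeof COMPOSITION_TYPES[number];

// Hypothetical category rules: which composition types each category may use.
const CATEGORY_COMPOSITIONS: Record<string, readonly CompositionType[] | undefined> = {
  movement: ["composition-1", "composition-2"],
  recovery: ["composition-4", "composition-5"],
};

// Small deterministic string hash, so the same content ID always gets the same variation.
function stableHash(text: string): number {
  let h = 0;
  for (let i = 0; i < text.length; i++) {
    h = (h * 31 + text.charCodeAt(i)) >>> 0;
  }
  return h;
}

interface Variation {
  color: ColorStrategy;
  composition: CompositionType;
}

// Picks a color strategy and a category-appropriate composition for one content item.
function pickVariation(contentId: string, category: string): Variation {
  // Categories without explicit rules fall back to the full list of composition types.
  const allowed = CATEGORY_COMPOSITIONS[category] ?? COMPOSITION_TYPES;
  return {
    color: COLOR_STRATEGIES[stableHash(contentId + ":color") % COLOR_STRATEGIES.length],
    composition: allowed[stableHash(contentId + ":composition") % allowed.length],
  };
}
```

Deterministic selection means regenerating an image reuses the same strategy unless a rule is deliberately changed, while different items spread across the available options.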

Traceability.

Every generated image maps back to its full prompt chain - every component, every variable, every reference image. Click any output, see exactly what produced it, and identify which layer to adjust. Bulk generation enables systematic testing - compare one batch of ten images against another batch of ten to evaluate whether a change produced real improvement, not a one-off fluke.
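A minimal sketch of how this kind of traceability could be modeled: each image gets a trace record, and two batches can be compared by which components changed and how many images a reviewer marked publish-ready. The record shape, `TraceLog`, and the `publishReady` flag are assumptions for illustration. The source does not describe the real storage or review tooling.

```typescript
// Hypothetical trace record: one per generated image.
interface GenerationTrace {
  imageId: string;
  batchId: string;
  componentVersions: Record<string, string>; // component name -> version used
  variables: Record<string, string>;         // e.g. content title, category
  referenceImages: string[];
  publishReady: boolean;                     // assumed to be set by a human reviewer
}

// In-memory log; a real system would persist traces.
class TraceLog {
  private traces: GenerationTrace[] = [];

  record(trace: GenerationTrace): void {
    this.traces.push(trace);
  }

  // Returns the trace for one image, or undefined if it was never recorded.
  forImage(imageId: string): GenerationTrace | undefined {
    return this.traces.filter((t) => t.imageId === imageId)[0];
  }

  forBatch(batchId: string): GenerationTrace[] {
    return this.traces.filter((t) => t.batchId === batchId);
  }
}

// Names of components whose versions differ between two traces, sorted alphabetically.
function changedComponents(a: GenerationTrace, b: GenerationTrace): string[] {
  const names = Object.keys({ ...a.componentVersions, ...b.componentVersions });
  return names.filter((n) => a.componentVersions[n] !== b.componentVersions[n]).sort();
}

// Share of traces marked publish-ready, between 0 and 1 (0 for an empty batch).
function publishReadyRate(traces: GenerationTrace[]): number {
  if (traces.length === 0) return 0;
  return traces.filter((t) => t.publishReady).length / traces.length;
}
```

Comparing `publishReadyRate` for two batches that differ in a single component, as reported by `changedComponents`, is one simple way to judge whether a change helped across a whole batch rather than on one image.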

75% First-Pass Accuracy Across 200+ AI-Generated Illustrations

Across client reviews, 75% of images were publish-ready on the first generation - a major win considering no manual prompting was involved. The remaining fixes were easily isolated: a hand position here, a concept that needed a regenerated image there. Quick surgical fixes or easy discards.

200+ production illustrations delivered in weeks at a fraction of traditional cost. When Yangra expands their content, they get beautiful on-brand illustrations in minutes, not days.

Yangra is now launching with a full library of illustrations that feel hand-crafted - and a system that keeps producing as their content grows.

A Reusable Architecture for Controlled AI Output at Scale

The architecture we built for Yangra - multi-agent pipeline, componentized prompts, category-level rules, full traceability - extends well beyond illustration.

Any workflow where AI output needs to be both controlled and variable at scale benefits from this approach:

- Separate interpretation from generation

- Make every component independently tunable

- Build visibility into every layer so a real team can operate and improve the system over time

This is a proven blueprint Whitespectre applies across other AI initiatives with our clients. The domain changes. The architecture holds.